After several months of hard work, we are proud to announce the release of version 2.2 of the Visual Basic Upgrade Companion. This version brings significant enhancements to the tool, including:

- Custom Maps: You can now define custom transformations for libraries that have somewhat similar interfaces. This should significantly speed up your migration projects if you are using third party controls that have a native .NET version or if you are already developing in .NET and wish to map methods from your VB6 code to your .NET code.

- Legacy VB6 Data Access Models: version 2.2 now supports the transformation of ADO, RDO and DAO to ADO.NET. This data access migration is implemented using the classes and interfaces from the System.Data.Common namespace, so you should be able to connect to any database using any ADO.NET data provider.

- Support for additional third party libraries: We have enhanced the support for third party libraries, for which we both extended the coverage of the libraries we already supported and added additional libraries. The complete list can be found here.

- Plus hundreds of bug fixes and code generation improvements based on the feedback from our clients and partners!

You can get more information on the tool on the Visual Basic Upgrade Companion web page. You can also read about our migration services, which have helped many companies to successfully take advantage of their current investments in VB6 by moving their applications to the .NET Framework in record time!

Do you remember this classic bit, a pretty bad joke from the end of the movie "Coming to America":

"A man goes into a restaurant, and he sits down, he's having a bowl of soup and he says to the waiter: "Waiter come taste the soup."

Waiter says: Is something wrong with the soup?

He says: Taste the soup.

Waiter says: Is there something wrong with the soup? Is the soup too hot?

He says: Will you taste the soup?

Waiter says: What's wrong, is the soup too cold?

He says: Will you just taste the soup?!

Waiter says: Alright, I'll taste the soup - where's the spoon??

Aha. Aha! ..."

Well, the thing is that this week, when I read this column over at Visual Studio Magazine, this bit of dialog was the first thing that came to my mind. First of all, we have the soup: according to the Support Statement for Visual Basic 6.0 on Windows® Vista™ and Windows® Server 2008™, the Visual Basic 6.0 runtime support files will be supported until at least 2018 (Windows Server 2008 came out on 27 February 2008):

Supported Runtime Files – Shipping in the OS: Key Visual Basic 6.0 runtime files, used in the majority of application scenarios, are shipping in and supported for the lifetime of Windows Vista or Windows Server 2008. This lifetime is five years of mainstream support and five years of extended support from the time that Windows Vista or Windows Server 2008 ships. These files have been tested for compatibility as part of our testing of Visual Basic 6.0 applications running on Windows Vista.

Then, we have the spoon, taken from the same page:

The Visual Basic 6.0 IDE

The Visual Basic 6.0 IDE will be supported on Windows Vista and Windows Server 2008 as part of the Visual Basic 6.0 Extended Support policy until April 8, 2008. Both the Windows and Visual Basic teams tested the Visual Basic 6.0 IDE on Windows Vista and Windows Server 2008. This announcement does not change the support policy for the IDE.

So, even though you will be able to continue using your Visual Basic 6.0 applications, sooner or later you will need to either fix an issue found in one of them, or add new functionality that is required by your business. And when that day comes, you will face the harsh reality that the VB6.0 IDE is no longer supported. Even worse, you have to jump through hoops in order to get it running. If you add the fact that we are probably going to see Windows 7 ship sooner rather than later, the prospect of being able to run your application but not fix it or enhance it on a supported platform becomes a real possibility. So make sure that you plan for a migration to the .NET Framework ahead of time - you don't want to hear anybody telling you "Aha. Aha!" if this were to happen.

I never really expected to witness events like the ones we are seeing nowadays. Certainly, our conception of society is being hugely affected, mainly by the adjustment of the economic system; probably an era is ending and a new one is about to be born, though I cannot possibly know that for sure. I just hope for a better one.

Normally, I am a pessimistic character, and more than ever we have reasons for being so. In this post, however, I want to be a little less pessimistic than usual, though with prudence. I just needed to reorder my thoughts for the sake of my mental health: I have been looking at so much C++, JavaScript and similar code lately.

I am neither an economist nor a sociologist, nor anything close; needless to say, I am just a regular old IT guy. I just want to reflect here, quite informally, on the IT model, and more specifically the software model, and its role in our society in a context like the one we are currently experiencing. What is a "software model", by the way? Here I simply mean (by overloading the term) the following: in a society we have state, political, economic and legal models, among others. Good or bad, recognized as such or not, I think we have also invented a software model which, to an important extent, orchestrates the other, historically better-known models; let us call them the (more) natural models.

I remember the 90s, when the new millennium approached and many conjectures were made about the Y2K problem, especially concerning legacy software systems, and its potentially devastating costs and negative effects. For instance, let us take some quotes from here (the related articles it points to are worth following):

"People have been sounding the alarm about the costs of the millennium bug--the software glitch that could paralyze computers come Jan. 1, 2000--for a couple of years. Now, the hard numbers are coming in and, if the pattern holds, they point to an even larger bill than many feared just a few months ago.

[...]

Outspoken Y2K-watcher Edward E. Yardeni, chief economist at Deutsche Bank Securities Inc., says the numbers show that some organizations are ''just starting'' to wake up to Y2K's potential for damage--but he believes the possible impact is enormous. In fact, Yardeni puts the chances of a recession in 2000 or 2001 at 70% because of ''a glitch in the flow of information.''"

Suddenly, after reading that again, the old-fashioned term "software crisis" (attributed to Bauer in 1968 and popularized by Dijkstra's seminal work in the seventies) that we were taught in college classrooms seemed to make more sense than ever. But romanticism aside, we in IT know that software is still quite problematic, for the same reasons as back then. It is now a matter of size. In Dijkstra's own words almost forty years ago (The Humble Programmer):

"To put it quite bluntly: as long as there were no machines, programming was no problem at all; when we had a few weak computers, programming became a mild problem, and now we have gigantic computers, programming has become an equally gigantic problem."

But those were surely different times, weren't they? Exaggerated or not (as a source of business or even religious opportunities), Y2K also gave us a serious (and global) warning about the extent to which software had grown into many parts of our normal society model, even at a time when the Web was apparently not yet wired into the global business model the way it has been in the last decades.

I do not know whether the final Y2K costs were as big as or even bigger than predicted, but I would guess that the problem certainly gave a huge boost to investment in the software sector. I am afraid it did not always lead to an improvement of the software model and its practices. Those were the golden times, probably ending now, when appearance was frequently valued more than content and Artificial Intelligence became a movie.

What will happen to the software model if the underlying economic model is now adjusting so dramatically, and the other, more natural models along with it? Will a shortage of capital for investment and consumption stop software model evolution and development, or change it (again) for the worse?

I do believe our software model remains essentially as bad as it was in the Y2K epoch. This is a consequence of its own abstract character living in a more and more "concrete" business world. I would further guess that it is even worse now because it has proliferated widely and has become structurally more complicated. Maintenance and formal understanding are now harder because of, among other things, dynamism, lack of standardization, and the tendency to value external functionality over maintainability and soundness. And exactly for that reason, I also believe (no matter what exactly the new economic model is going to look like) that software will have to be structurally stronger and more reliable, because after economic stabilization and restart, whenever that happens, businesses will become stricter in order to prevent mere appearance from being able to generate wealth once again.

I would expect (wish) that software for effectively manipulating software (legacy and fresher, dynamic spaghetti included) will be in more demand than ever as a consequence. More effective testing and dynamic maintenance will be required during any adjustment phases of the new economic model. I also see standardization as a natural requirement and driver of a higher level of software quality. Platform independence will be more important than ever, I guess. No matter which agents come out as winners during the adjustment and after the recovery of the economic system, a better software model will be critical for each one of them, globally. Software, as an expression of substantial knowledge, will in any case be considered an asset. I think we should be seeing a less speculative and more consistent economic model, where precisely a better software model will be a differentiator for competing with real substance and not just fancy emptiness. The statement must be demonstrable.

Sometimes things do not turn out as bad as they appeared. Sometimes they turn out even worse and, now, we absolutely do have to be prepared for such a scenario. But I also want to believe that there can be an opportunity out there. I would like to think that an improvement of the software model will be an essential piece of the economic transition, and that a transition to something better in software will take place in the end. I wish for it, at least.

I recommend reading Dijkstra again, especially these days, just as an interesting historical comparison; and, trying honestly not to fool myself, I quote him referring to his vision of a better software model, as we have called it here:

"There seem to be three major conditions that must be fulfilled. The world at large must recognize the need for the change; secondly the economic need for it must be sufficiently strong; and, thirdly, the change must be technically feasible."

I think we might have the first two of them. Concerning the third one, and slowly returning to the C++ code I am looking at, I will just reshape his words: I absolutely fail to see how I could keep programs firmly within my intellectual grip when this programming language escapes my intellectual control. But that is exactly what we have to do.

So you now have a license of the Visual Basic Upgrade Companion, you open it up, and you don't know what to do next. Well, here are 5 simple steps you can follow to get the most out of the migration:

- Read the "Getting Started" Guide: This is of course a no-brainer, but well, we are all developers, and as such, we only read the manual when we reach a dead end ;). The Getting Started guide is installed alongside the Visual Basic Upgrade Companion, and you can launch it from the same program group in the start menu:

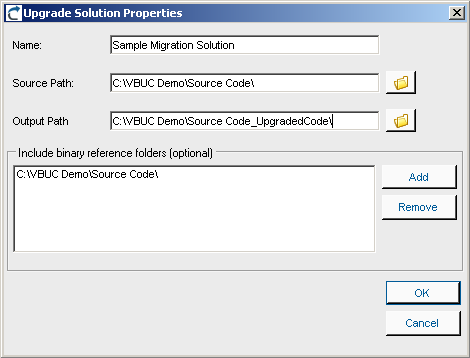

- Create a New Upgrade Solution: the first step for every migration will be to create a new VBUC Migration Solution. In it, you need to select the directory that holds your sources, select where you want the VBUC to generate the output .NET code, and the location of the binaries generated and used by the application. This last bit of information is very important, since the VBUC extracts information from the binaries in order to resolve the references between projects and create a VS.NET solution complete with references between the projects:

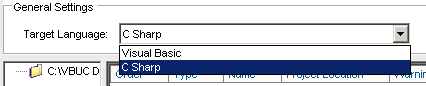

- Select the Target Language: One of the most significant advantages of using the Visual Basic Upgrade Companion is that it allows you to generate Visual Basic.NET or C# code directly. This can be selected using the combo box in the Upgrade Manager's UI:

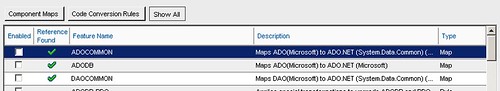

- Create a Migration Profile: The Visual Basic Upgrade Companion gives end users a large degree of control over how the .NET code will be generated. In addition to the option of creating either C# or VB.NET code, there are also a large number of transformations that can be turned on/off using the profile manager. Starting with version 2.2, the Profile Manager recognizes the components used in the application and adds a green checkmark to the features that apply transformations to those components. This simplifies profile creation and improves the quality of the generated code from the start:

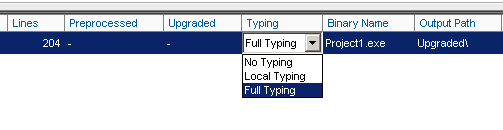

- Select the Code Typing Mechanism: the VBUC is able to determine the data type for variables in the VB6.0 code that are either declared without type or as Variant. To do this, for each project included in the migration solution you can select three levels of typing: Full Typing, Local Typing or No Typing. Full Typing is the most accurate typing mechanism - it infers the type for the variables depending on how they are used throughout the project. This means that it needs to analyze the complete source code in order to make typing decisions, which slows the migration process and has higher memory consumption. Local Typing also infers data types but only within the current scope of the variable. And No Typing leaves the variables with the same data types from the VB6.0 code:

After going through these 5 steps, you will have an Upgrade Solution ready to go that will generate close to optimal code. The next step is just to hit the "Start" button and wait for the migration to finish:

A few days ago we posted some new case studies to our site. These case studies highlight the positive impression that the capabilities of the Visual Basic Upgrade Companion leave on our customers when we do Visual Basic 6.0 to C# migrations.

The first couple of them deal with a UK company called Vertex. We did two migration projects with them, one for a web-based application, and another one for a desktop application. Vertex had a very clear idea of how they wanted the migrated code to look. We added custom rules to the VBUC in order to meet their highly technical requirements, so the VBUC would do most of the work and speed up the process. Click on the links to read the case studies for their Omiga application or for the Supervisor application.

The other one deals with a Texas-based company called HSI. By going with us they managed to move all their Visual Basic 6.0 code (including their data access and charting components) to native .NET code. They estimate that by using the Visual Basic Upgrade Companion they saved about a year in development time and a lot of money. You can read the case study here.

As profiled in a previous post, we are building a tool for reasoning about CSS and related HTML styling tools using some logic framework (logic grammars in human-readable style are usually highly ambiguous, and consequently an interesting case study). So, we need a textual language, a DSL, for the tool. I was asked to try MGrammar (simply Mg), a DSL generator that is part of the Microsoft Oslo SDK, recently presented at the MS PDC.

Actually, I was unaware of the Oslo project details, so I was a little bit skeptical about the thing, also because it would entail learning yet another new language (M). But I fought against my personal preconceptions and decided just to take a look and try to have fun with it. I do not intend to blog about the whole Oslo project here, of course; just to tell a simple story about using Mg in its current state, no more.

In general, I very much like the idea that data specification (models), data storage, querying and the like can be separated from procedural languages in a declarative and yet more expressive way; hopefully, my own way to express my model (beyond standard diagrams and graphic perspectives). Oslo and M address this general vision of model-oriented software development, and Mg addresses the expressivity part, as far as I understand from the source material, of which there is not much yet.

What I have seen so far of M is indeed very interesting, and I really liked it; it extends LINQ, which I consider a very interesting proposal of MS's; it also reminded me of OCL in some ways. In fact, there exists an open source OCL library called Oslo, which has nothing to do with MS; it is just funny to mention.

Mg

I focused on trying out Mg; we do not consider M at all. We do not present a tutorial here; there is a nice one, for instance, here. If you are interested in my example and sources, please mail me and I will send them to you.

The SDK also contains a nice demo for a musical language (Song grammar) and its player in C#; moreover, M and Mg themselves are specified in Mg, and the corresponding sources are available in the SDK. Hence, you learn by reading grammars, because the documentation is not yet as rich as I would like.

Mg source files resemble, at least in principle, those of other parser generators (like ANTLR, Coco, JavaCC, YACC/Lex and relatives). I do not know the details of the parsing technique behind Mg. However, according to what mg.exe reports, it would be LR parsing.

Mg offers really powerful features. For instance, here are some tokenizing rules:

token Digit = "0".."9";

token Digits = Digit+;

token Sign = ("+"|"-");

token Number = Sign?Digits ("." Digits)?;

Or you can constrain the repetition count (at least 1, at most 10) as in:

token ANotTooLongNumber = Digit#1..10;

You may also have parameters in scanner rules, so you can write (taken from the nice tutorial here):

token QuotedText(Q) = Q (^('\n' | '\r') - Q)* Q;

token SingleQuotedText = QuotedText("'");

token DoubleQuotedText = QuotedText('"');

This handles different types of quotation depending on the parameter Q! Notice the subpattern ^('\n' | '\r') - Q. It means any character except newline or return, excluding also the character Q.
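If you are more used to regular expressions than to Mg, a rough Python sketch of the same token rules might help. This is only an approximation written for illustration: the names NUMBER, NOT_TOO_LONG_NUMBER and quoted_text are mine (not part of Mg or the SDK), and I am assuming that Digit#1..10 means "between 1 and 10 repetitions".

```python
import re

# Rough counterpart of: token Number = Sign? Digits ("." Digits)?;
NUMBER = re.compile(r"[+-]?[0-9]+(?:\.[0-9]+)?")

# Rough counterpart of: token ANotTooLongNumber = Digit#1..10;
# (assumes #1..10 means between 1 and 10 repetitions)
NOT_TOO_LONG_NUMBER = re.compile(r"[0-9]{1,10}")


def quoted_text(q):
    """Build a pattern like QuotedText(Q): the quote character Q, then any
    characters except newline, return or Q itself, then Q again."""
    esc = re.escape(q)
    return re.compile(f"{esc}[^\\n\\r{esc}]*{esc}")


SINGLE_QUOTED_TEXT = quoted_text("'")
DOUBLE_QUOTED_TEXT = quoted_text('"')
```

The parameterized rule becomes an ordinary function that returns a pattern, which is more or less what the Q parameter buys you in Mg.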

The Oslo SDK provides an editor called Intellipad, which can be used for both M and Mg development. You need to experiment a little bit before getting used to it but, after a short time, it turns out to be a very nice tool. Actually, a command-line compiler for Mg is included in the SDK, so you may edit your grammar using any text editor and compile it from a shell. But Intellipad is quite powerful: it allows you to edit and debug your grammar simultaneously and quickly. Intellipad shows you several panes to work with: in one you write your grammar, and in another you may enter input for your grammar, which is immediately checked against your rules. A third (tree view) pane shows you the AST being projected by your grammar on the input you are providing.

Finally, a fourth pane shows you the error messages, including any parsing ambiguities that arise for the given input and grammar. Interestingly, only then do you notice any potential conflict. I did not see any form of static analysis in the editor for warning about such cases. Is there any?

Besides that, I actually enjoyed using it. I am not sure I appreciate the whole functionality yet, but it was quite easy to create my grammar. Mg is modular, as M is, so you can organize and reuse your grammar parts. That is very nice.

To start defining a language, you write something like:

language LogicFormulae{

//rules go here inside

}

For defining the language you mainly have "token" and "syntax" statements, among others. In this case, the name "LogicFormulae" will be used later on when we access the corresponding parser programmatically.

Case Study

Back to our case study: our simple language contains logic formulae like "p&(p->q) <-> p&q" (called well-formed formulae, or wff) where, as usual, a lot of ambiguity (conflicts) might occur. The result is frequently that you have to rewrite your grammar into a usually uglier one in order to recover determinism, and so the fun is gone. What I liked about Mg is its very readable style for handling such cases. For instance, I have a rule like:

syntax ComposedWff =

precedence 1: ParenthesisWff

| precedence 1: PossibilityWff

| precedence 1: NecessityWff

| precedence 1: QuantifiedWff

| precedence 2: NotWff

| precedence 3: AndWff

| precedence 4: ImpliesWff

| precedence 4: EquivWff

| precedence 5: OrWff

;

Using the keyword precedence I can "reorder" the rule to avoid ambiguity. Thus, "all x.p & q" parses as "(all x.p) & q" under this rule because of the precedence I chose.

Another example shows you how to deal with associativity and operator precedence:

syntax AndWff = Wff left(4) "&" Wff;

syntax ImpliesWff = Wff right(3) "->" Wff;

Mg automatically projects syntax into its D-Graphs using the name of the rule and the token images, so it always generates an AST without you having to specify it, which is very useful. Thus, the "AndWff" rule will produce a tree with three children (including the token "&"), labeled with "AndWff". You can specify your own constructors to be produced instead:

syntax AndWff = x:Wff left(4) "&" y:Wff => And[x, y];

Constructors like "And" need not be classes; they will simply be labels in nodes.
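To make the effect of left(...) and right(...) more concrete, here is a small precedence-climbing parser in Python. It is not how Mg works internally (mg.exe reports LR parsing); it is just a sketch that assumes a higher number binds tighter, with function names of my own. The tuple labels simply mirror constructors like And[x, y].

```python
import re

# Binding power, associativity and node label per operator. The numbers
# follow left(4) and right(3) from the rules above; "higher binds tighter"
# is an assumption of this sketch.
OPERATORS = {
    "&": (4, "left", "And"),
    "->": (3, "right", "Implies"),
}

TOKEN = re.compile(r"\s*(->|&|\(|\)|[a-z]+)")


def tokenize(text):
    """Split a formula into operator, parenthesis and identifier tokens."""
    text = text.rstrip()
    tokens, pos = [], 0
    while pos < len(text):
        match = TOKEN.match(text, pos)
        if match is None:
            raise SyntaxError(f"unexpected input at position {pos}")
        tokens.append(match.group(1))
        pos = match.end()
    return tokens


def parse(text):
    """Parse a formula into nested (label, left, right) tuples."""
    tree, rest = parse_expr(tokenize(text), 0)
    if rest:
        raise SyntaxError(f"unexpected token {rest[0]!r}")
    return tree


def parse_expr(tokens, min_power):
    left, tokens = parse_atom(tokens)
    while tokens and tokens[0] in OPERATORS:
        power, assoc, label = OPERATORS[tokens[0]]
        if power < min_power:
            break
        # A left-associative operator needs a strictly tighter right operand;
        # a right-associative one accepts the same power again.
        next_min = power + 1 if assoc == "left" else power
        right, tokens = parse_expr(tokens[1:], next_min)
        left = (label, left, right)
    return left, tokens


def parse_atom(tokens):
    if not tokens:
        raise SyntaxError("unexpected end of input")
    head, rest = tokens[0], tokens[1:]
    if head == "(":
        inner, rest = parse_expr(rest, 0)
        if not rest or rest[0] != ")":
            raise SyntaxError("missing ')'")
        return inner, rest[1:]
    if head in OPERATORS or head == ")":
        raise SyntaxError(f"unexpected token {head!r}")
    return head, rest
```

With these settings "p & q & r" groups to the left, "p -> q -> r" groups to the right, and "&" binds tighter than "->".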

Conflicts

The conflicts were apparently solved this way, because the engine behind Intellipad did not complain anymore about my test cases. I do not know whether there is a way to verify the grammar using Intellipad, so I just assumed it was conflict-free. However, when I compiled it using mg.exe (with the option -reportConflicts), I got a list of warnings, as with any LR parser generator. That was disappointing. Are we seeing different parsing engines?

Using C#

The generated parser and the graphs it produces can be accessed programmatically, something that was of special interest in my case, beyond working with Intellipad. Because I was not able to create a parser image directly from Intellipad, I did the following: I compiled my grammar using the mg.exe command, producing a so-called image, a binary file called "mgx" (other targets are possible, I think). This mgx image can be loaded in a C# project using some libraries of the SDK. (It can also be executed using mgx.exe.) You would load the image like this:

parser = MGrammarCompiler.LoadParserFromMgx(stream, languageName);

where "stream" is a stream referring to the mgx file I produced with the mg compiler, and "languageName" is a string indicating the language I want to use for parsing ("LogicFormulae" in my case). I then parse any file indicated by the string variable "input" as follows:

rootNode = parser.ParseObject(input, ErrorReporter.Standard);

The variable rootNode (of type object, by the way!) refers to the root of the parsing graph. A special kind of object of class GraphBuilder gives you access to the nodes, node labels and node children, if any. Hence, using such an object you visit the graph as usual, for instance using a pattern like:

void VisitAst(GraphBuilder builder, object node){

foreach (object childNode in builder.GetSequenceElements(node))

if (childNode is string)

VisitValue(childNode);

else VisitAst(builder, childNode);

}

For some reason, the library uses object as the node type, as you have probably noticed.
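The same traversal pattern, stripped of the SDK types, can be sketched in Python. Here a node is assumed to be a (label, children) pair and leaf values are plain strings; this layout is my own simplification for illustration, not the actual D-Graph API.

```python
def visit_ast(node, on_value):
    """Depth-first walk that calls on_value for every string leaf."""
    label, children = node
    for child in children:
        if isinstance(child, str):
            on_value(child)
        else:
            visit_ast(child, on_value)


# A hand-built tree for "p & q -> r", using the rule names as labels.
tree = ("ImpliesWff", [("AndWff", ["p", "&", "q"]), "->", "r"])
leaves = []
visit_ast(tree, leaves.append)
print(leaves)  # ['p', '&', 'q', '->', 'r']
```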

Conclusions

I found Mg very powerful, and working with Intellipad for prototyping was actually fun. Well, almost always. I only have some doubts concerning usage and performance. For instance, using Intellipad you are able to develop and test very fast. But it seems that there is no static analysis tool inside Intellipad for warning about possible conflicts in the grammar.

The other unclear thing I saw is the loading time of the engine in C#. It takes really too long before you get the parser object from the mgx file. In fact, the mg compiler seems to work too slowly, in particular compared to Intellipad. It seems as though mg.exe and Intellipad were not connected as a tool chain, or something like that. But surely it is just me, because this is just my first contact with this SDK.

In Visual Basic 6.0 you can specify optional parameters in a function or sub signature. C#, however, does not support optional parameters (at least prior to C# 4.0). In order to migrate code that uses these optional parameters, the Visual Basic Upgrade Companion creates different overloaded methods with all the possible combinations present in the method's signature. The following example will further explain this.

Take this declaration in VB6:

Public Function OptionSub(Optional ByVal param1 As String, Optional ByVal param2 As Integer, Optional ByVal param3 As String) As String

OptionSub = param1 + param2 + param3

End Function

In the declaration, you see that you can call the function with 0, 1, 2 or 3 parameters. When running the code through the VBUC, you will get four different methods, with the different overload combinations, as shown below:

static public string OptionSub( string param1, int param2, string param3)

{

return (Double.Parse(param1) + param2 + Double.Parse(param3)).ToString();

}

static public string OptionSub( string param1, int param2)

{

return OptionSub(param1, param2, "");

}

static public string OptionSub( string param1)

{

return OptionSub(param1, 0, "");

}

static public string OptionSub()

{

return OptionSub("", 0, "");

}

The C# code above doesn't have any change applied to it after it comes out of the VBUC. There may be other ways around this problem, such as using parameter arrays in C#, but that would be more complex in scenarios like when mixing different data types (as seen above).
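The overload pattern above is regular enough to be derived mechanically: n trailing optional parameters give n + 1 overloads, and each shorter overload delegates to the full one with default values filled in. The following Python sketch reproduces that derivation for the example. It is only an illustration of the pattern, not the VBUC's actual implementation, and the function name and the tuple layout are my own assumptions.

```python
def overloads(name, params):
    """Derive C#-style overloads for trailing optional parameters.

    params is a list of (csharp_type, param_name, default_literal) tuples.
    Returns (signature, delegating_body) pairs; the body is None for the
    full overload, which keeps the real method body.
    """
    result = []
    for count in range(len(params), -1, -1):
        kept = params[:count]
        declared = ", ".join(f"{ptype} {pname}" for ptype, pname, _ in kept)
        signature = f"{name}({declared})"
        if count == len(params):
            body = None
        else:
            # Pass through the kept parameters, then fill in the defaults.
            args = [pname for _, pname, _ in kept]
            args += [default for _, _, default in params[count:]]
            body = f"return {name}({', '.join(args)});"
        result.append((signature, body))
    return result


OPTION_SUB = [("string", "param1", '""'), ("int", "param2", "0"), ("string", "param3", '""')]

for signature, body in overloads("OptionSub", OPTION_SUB):
    print(signature, "=>", body or "<original body>")
```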

Right now we are entering the final stages of testing for the release of the next version of the Visual Basic Upgrade Companion (VBUC). This release is scheduled for sometime within the next month.

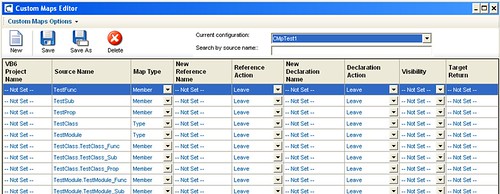

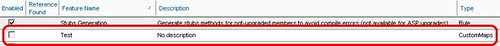

One of the most exciting new features of this new release is the addition of Custom Maps. Custom Maps are simple translation rules that can be added to the VBUC so they are applied during the automated migration. This allows end users to fine-tune specific mappings so that they better suit a particular application. Or you can actually create mappings for third party COM controls that you use, as long as the APIs for the source and target controls are similar.

The VBUC will include an interface for you to edit these maps:

Also, once you create the maps, you can select them using the Profile Editor:

Custom Maps add a great deal of flexibility to the tool. Even though only simple mappings are allowed (simple as in one-to-one mappings; currently it doesn't allow you to add new code before or after the mapped element), this feature allows end users to edit, modify or delete any transformation included, and to add their own. By doing this, you can have the VBUC do more work in an automated fashion, freeing up developers' time and speeding up the migration process even more.
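Conceptually, a one-to-one map is just a lookup from a source member to a target member, with everything unmapped left untouched. The Python sketch below shows that idea only; the control and member names are made up for illustration and the actual VBUC map format is not shown here.

```python
# Hypothetical one-to-one maps: VB6/COM member name -> .NET member name.
CUSTOM_MAPS = {
    "OldGrid.TextMatrix": "NewGrid.CellText",
    "OldGrid.Rows": "NewGrid.RowCount",
}


def apply_custom_maps(member, maps=CUSTOM_MAPS):
    """Return the mapped member name, or the original one when no map applies."""
    return maps.get(member, member)


print(apply_custom_maps("OldGrid.Rows"))     # NewGrid.RowCount
print(apply_custom_maps("OldGrid.Refresh"))  # OldGrid.Refresh (no map, unchanged)
```

Because each rule renames exactly one element, there is no room to emit extra statements before or after it, which is the limitation mentioned above.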

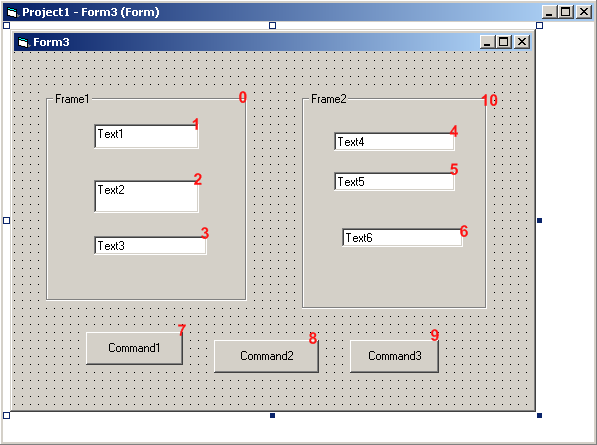

While working on a migration project recently, we found a very particular behavior of the TabIndex property when migrating from Visual Basic 6.0 to .NET. It is as follows:

In VB6, we have the following form: (TabIndexes in Red)

Note that TabIndex 0 and 10 correspond to the Frames Frame1 and Frame2. If you place the focus on the textbox Text1 and start pressing the tab key, it will go through all the controls in the following order (based on the TabIndex): 1->2->3->4->5->6->7->8->9.

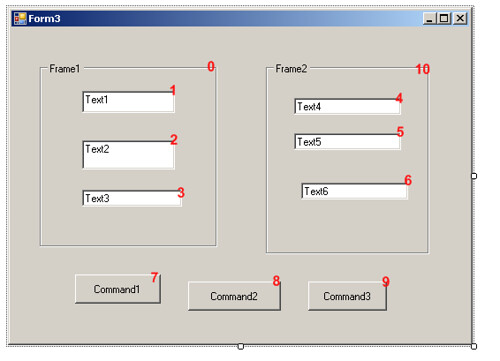

After the migration, we have the same form but in .NET. We still keep the same TabIndex for the components, as shown below:

In this case TabIndex 0 and 10 correspond to the GroupBoxes Frame1 and Frame2. When going through the controls in .NET, however, if you start pressing the tab key, it will use the following order: 1->2->3->7->8->9->4->5->6. As you can see, it first goes through the buttons (7, 8 and 9) instead of going through the textboxes. This requires an incredibly easy fix (just changing the TabIndex on the GroupBox) to replicate the behavior from VB6, but I thought it would be interesting to throw this one out there. This is one of the scenarios where there is not much that the VBUC can do (it is setting the properties correctly during the migration). It is just a difference in behavior between VB6 and .NET for which a manual change IS necessary.
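The difference is easier to see in a small simulation. The Python sketch below assumes a layout that is consistent with the orders described above (Frame1 holds controls 1 to 3, Frame2 holds controls 4 to 6, and the buttons 7 to 9 sit directly on the form); since the screenshots are not reproduced here, treat that layout and all the names as assumptions. It models VB6 as ordering every control by TabIndex across the whole form, and .NET as ordering the children of each container separately.

```python
from dataclasses import dataclass, field


@dataclass
class Control:
    name: str
    tab_index: int
    children: list = field(default_factory=list)


def leaf_controls(container):
    """Yield every control that has no children, at any nesting depth."""
    for child in container.children:
        if child.children:
            yield from leaf_controls(child)
        else:
            yield child


def vb6_tab_order(form):
    """Flat model: all controls on the form are ordered by TabIndex."""
    ordered = sorted(leaf_controls(form), key=lambda c: c.tab_index)
    return [c.tab_index for c in ordered]


def dotnet_tab_order(container):
    """Nested model: siblings are ordered by TabIndex, and a container is
    fully traversed at the position given by its own TabIndex."""
    order = []
    for child in sorted(container.children, key=lambda c: c.tab_index):
        if child.children:
            order.extend(dotnet_tab_order(child))
        else:
            order.append(child.tab_index)
    return order


frame1 = Control("Frame1", 0, [Control(f"Text{i}", i) for i in (1, 2, 3)])
frame2 = Control("Frame2", 10, [Control(f"Text{i}", i) for i in (4, 5, 6)])
buttons = [Control(f"Command{i}", i) for i in (7, 8, 9)]
form = Control("Form1", 0, [frame1, frame2, *buttons])

print(vb6_tab_order(form))     # [1, 2, 3, 4, 5, 6, 7, 8, 9]
print(dotnet_tab_order(form))  # [1, 2, 3, 7, 8, 9, 4, 5, 6]

frame2.tab_index = 4           # the manual fix: move the GroupBox earlier
print(dotnet_tab_order(form))  # [1, 2, 3, 4, 5, 6, 7, 8, 9]
```

In this model, lowering the TabIndex of Frame2 below that of the buttons is enough to get the VB6 order back.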

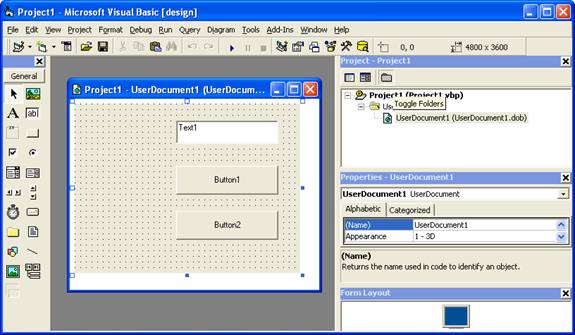

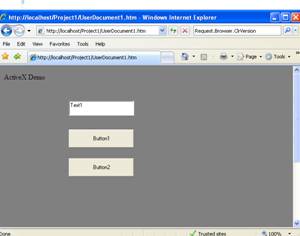

This post describes an interesting workaround that you can use to support the migration of ActiveX Documents with the Artinsoft Visual Basic Upgrade Companion, which is one of the Artinsoft \ Mobilize.NET tools you can use to modernize your Visual Basic, Windows Forms and PowerBuilder applications.

Currently the Visual Basic Upgrade Companion does not allow you to process ActiveX Documents directly, but there is a workaround: in general, ActiveX Documents are really close to User Controls, which are elements that are migrated automatically by the Visual Basic Upgrade Companion.

This post provides a link to a tool (DOWNLOAD TOOL) that can fix your VB6 projects, so the Visual Basic Upgrade Companion processes them. To run the tool:

1) Open the command prompt

2) Go to the Folder where the .vbp file is located

3) Execute a command line command like:

FixUserDocuments Project1.vbp

This will generate a new project called Project1_modified.vbp. Migrate this new project and now UserDocuments will be supported.

First Some History

VB6 allows you to create UserDocuments, which can be embedded inside an ActiveX container. The most common one is Internet Explorer. After compilation, the document is contained in a Visual Basic Document file (.VBD) and the server is contained in either an .EXE or .DLL file. During development, the project is in a .DOB file, which is a plain text file containing the definitions of the project’s controls, source code, and so on.

If an ActiveX document project contains graphical elements that cannot be stored in text format, they will be kept in a .DOX file. The .DOB and .DOX files in an ActiveX document project are parallel to the .FRM and .FRX files of a regular Visual Basic executable project.

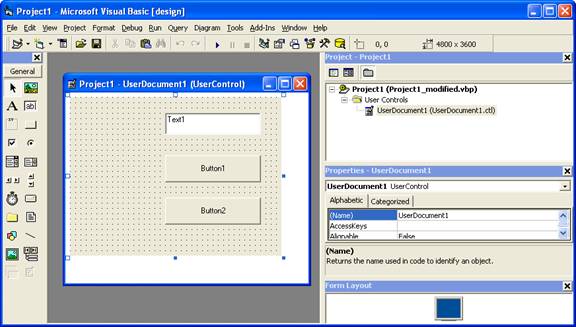

The trick to support ActiveX documents is that in general they are very similar to UserControls, and .NET UserControls can also be hosted in a WebBrowser. The following command line tool can be used to update your VB6 projects. It will generate a new solution where UserDocuments will be defined as UserControls.

If you have an ActiveX document like the following:

Then after running the tool you will have an Project like the following:

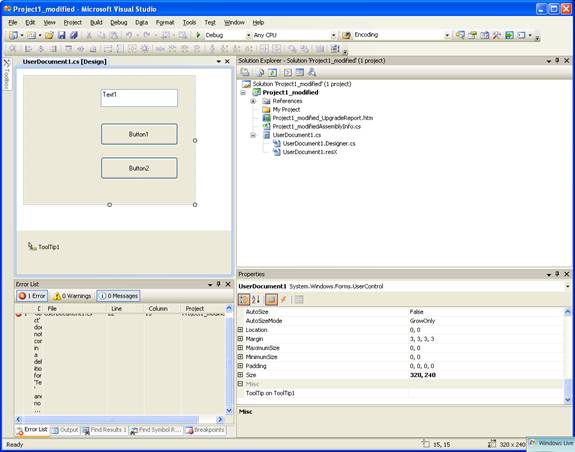

So after you have upgraded the projet with the Fixing tool, open the Visual Basic Upgrade Companion and migrate your project.

After migration you will get something like this:

To use your migrated code embedded in a Web Browser copy the generated assemblies and .pdb to the directory you will publish:

Next create an .HTM page. For example UserDocument1.htm

The contents of that page should be something like the following:


<html>

<body>

<p>ActiveX Demo<br> <br></p>

<object id="UserDocument1"

classid="http:<AssemblyFileName>#<QualifiedName of Object>"

height="500" width="500" VIEWASTEXT>

</object>

<br><br>

</body>

</html>

For example:

<html>

<body>

<p>ActiveX Demo<br> <br></p>

<object id="UserDocument1"

classid="http:Project1.dll#Project1.UserDocument1"

height="500" width="500" VIEWASTEXT>

</object>

<br><br>

</body>

</html>

|
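Since the only parts of that page that change between projects are the assembly file name and the qualified class name, the object markup can also be generated. The following is a minimal sketch in JavaScript and is not part of the migration tooling: buildObjectTag and escapeAttr are hypothetical helper names, and the classid format is simply the one used in the page above.

```javascript
// Hypothetical helper: escapes characters that would break an HTML attribute value.
function escapeAttr(value) {
  return String(value).replace(/&/g, "&amp;").replace(/"/g, "&quot;");
}

// Hypothetical helper: builds the <object> markup that hosts a migrated control.
// assemblyFileName is the published DLL, qualifiedName is Namespace.ClassName.
function buildObjectTag(id, assemblyFileName, qualifiedName, width, height) {
  if (!assemblyFileName || !qualifiedName) {
    throw new Error("assemblyFileName and qualifiedName are required");
  }
  // Same format as in the sample page: http:<AssemblyFileName>#<QualifiedName of Object>
  const classId = "http:" + assemblyFileName + "#" + qualifiedName;
  return '<object id="' + escapeAttr(id) + '"\n' +
    'classid="' + escapeAttr(classId) + '"\n' +
    'height="' + escapeAttr(height) + '" width="' + escapeAttr(width) + '" VIEWASTEXT>\n' +
    '</object>';
}

// Usage: reproduces the object tag of the Project1 example.
console.log(buildObjectTag("UserDocument1", "Project1.dll", "Project1.UserDocument1", 500, 500));
```

Keeping the classid construction in one place makes it harder to mistype the assembly or class name when you publish several controls.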

Now all that is left is to publish the output directory.

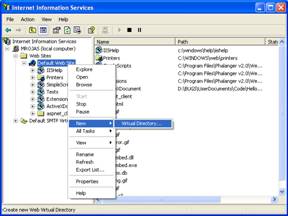

To publish your WinForms user control follow these steps.

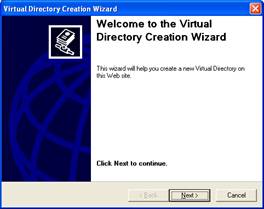

- Create a Virtual Directory:

- A Wizard to create a Virtual Directory will appear.

Click Next

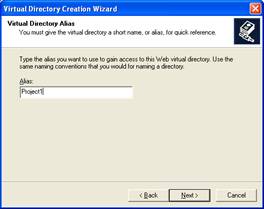

Name the directory as you want. For example Project1. Click Next

Select the location of your files. Click the Browse button to open a dialog box where you can select your files location. Click Next

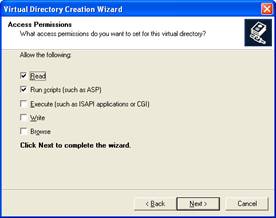

Check the Read and Run scripts boxes and click Next.

Now click Finish.

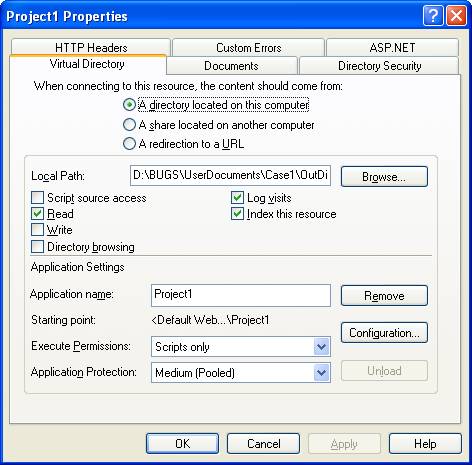

- Properties for the Virtual Directory will look like this:

NOTE: to see this dialog, right-click the virtual directory.

- Now just browse to the address, for example http://localhost/Project1/UserDocument1.htm

And that should be all! :)

The colors are different because of the host configuration. However, a simple CSS rule like:

<style>

body {background-color: gray;}

</style>

can make the desired change:

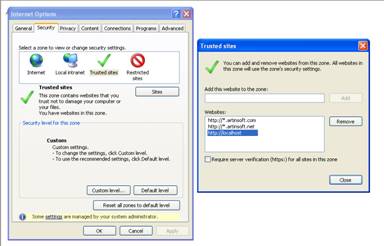

Notice that there will be security limitations, for example for things like MessageBoxes.

You can allow restricted operations by adding your site to the trusted sites:

For example:

Restrictions

The constraints for this solution include:

* This solution requires a Windows operating system on the client side

* Internet Explorer 6.0 is the only browser that provides support for this type of hosting

* It requires .NET runtime to be installed on the client machine.

* It also requires Windows 2000 and IIS 5.0 or above on the server side

Due to all of the above constraints, it might be beneficial to detect the capabilities of the client machine and then deliver content that is appropriate to them. For example, since forms controls hosted in IE require the presence of the .NET runtime on the client machine, we can write code to check if the client machine has the .NET runtime installed. You can do this by checking the value of the Request.Browser.ClrVersion property. If the client machine has .NET installed, this property will return the version number; otherwise it will return 0.0.

Adding a script like:

<script>

if ((navigator.userAgent.indexOf(".NET CLR")>-1))

{

//alert ("CLR available " +navigator.userAgent);

}

else

alert(".NET SDK/Runtime is not available for us from within " + "your web browser or your web browser is not supported." + " Please check with http://msdn.microsoft.com/net/ for " + "appropriate .NET runtime for your machine.");

</script>

Will help with that.
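If you also want to know which runtime versions the browser reports, the same user-agent check can be extended. This is only a sketch: getClrVersions is a hypothetical helper name, and it assumes the browser lists each installed runtime as a ".NET CLR x.y.z" token in its user-agent string, which is the same assumption the script above makes.

```javascript
// Hypothetical helper: returns every .NET CLR version advertised in a user-agent string.
// An empty array means no runtime was reported.
function getClrVersions(userAgent) {
  const versions = [];
  const pattern = /\.NET CLR (\d+(?:\.\d+)*)/g;
  let match;
  while ((match = pattern.exec(userAgent)) !== null) {
    versions.push(match[1]);
  }
  return versions;
}

// Usage with a sample user-agent string; in a page you would pass navigator.userAgent.
const sampleUserAgent =
  "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; .NET CLR 1.1.4322; .NET CLR 2.0.50727)";
const reportedVersions = getClrVersions(sampleUserAgent);
if (reportedVersions.length === 0) {
  console.log("No .NET runtime reported by this browser");
} else {
  console.log("Reported .NET runtimes: " + reportedVersions.join(", "));
}
```

Returning the list instead of a yes/no answer lets the page decide whether the reported runtime is recent enough for the hosted control.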

References:

ActiveX Documents Definitions:

http://www.aivosto.com/visdev/vdmbvis58.html

Hosting .NET Controls in IE

http://www.15seconds.com/issue/030610.htm

Today Eric Nelson covered the quasi-legendary legacy transformation options graph on his blog. Taking into account the 4 basic alternatives for legacy renovation, that is, Replace, Rewrite, Reuse or Migrate, this diagram shows the combination of 2 main factors that might lead to these options: Application Quality and Business Value. As Declan Good mentioned in his “Legacy Transformation” white paper, Application Quality refers to “the suitability of the legacy application in business and technical terms”, based on parameters like effectiveness, functionality, stability of the embedded business rules, stage in the development life cycle, etc. On the other hand, Business Value is related to the level of customization, that is, if it’s a unique, non-standard system or if there are suitable replacement packages available.

This diagram represents the basic decision criteria, but there are other issues that must be considered, specifically when evaluating VB to .NET upgrades. For example, as Eric mentions in his blog post, a lot of manual rewrite projects face so many problems that they end up being abandoned. One of ArtinSoft’s recent customers, HSI, went for the automated migration approach after analyzing the implications of a rewrite from scratch. They just couldn’t afford the time, cost and disruption involved. As Ryan Grady, owner of the company in charge of this VB to .NET migration project for HSI, puts it, “very quickly we realized that upgrading the application gave us the ability to have something already and then just improve each part of it as we moved forward. Without question, we would still be working on it if we’d done it ourselves, saving us up to 12 months of development time easily”. Those 12 months translated into a US$160,000 saving for HSI! (You can read the complete case study at ArtinSoft’s website.)

On the other hand, for some companies reusing (i.e. wrapping) their VB6 applications to run on the .NET platform is simply not an option, no matter where they fall in the aforementioned chart. For example, there are strict regulations in the Financial and Insurance verticals that deem keeping critical applications in an environment that’s no longer officially supported simply unacceptable. Besides, sometimes there’s another drawback to this alternative: it adds more elements to be maintained and two sets of data to be kept synchronized, and it requires programmers to switch constantly between 2 different development environments.

Therefore, an assessment of a software portfolio before deciding on a legacy transformation method must take into account several factors that are particular to each case, like available resources, budget, time to market, compliance with regulations, and of course, the specific goals you want to achieve through this application modernization project.

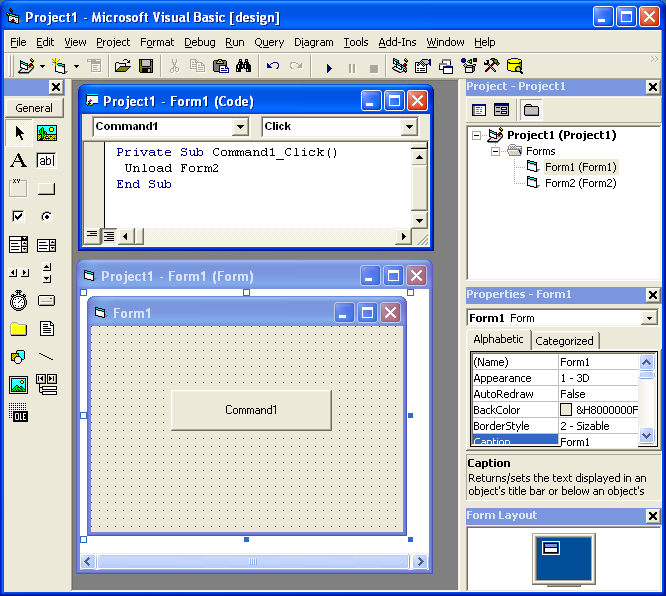

During the migration of a simple project, we found an interesting migration detail.

The solution has a project with two forms, Form1 and Form2. Form1 has a command button, and the Click handler for that button executes code like Unload Form2.

It could happen that Form2 has not been loaded yet; in VB6 that is not a problem. In .NET the code will be something like form2.Close(), and it could cause problems if form2 was never created.

A possible fix is to add a flag that indicates whether the form was instantiated, and to call Close() only in that case.

Recently, following a post in an AS400 Java group, someone asked about a method for signing and verifying a file with PGP.

I thought, "Damn, that seems like a very common thing, it shouldn't be that difficult", and started googling for it.

I found, as the poster said, that the Bouncy Castle API can be used, but it was not easy.

So I learned a lot about PGP and Bouncy Castle and, thank God, Tamas Perlaky posted a great sample that signs a file, so I didn't have to spend a lot of time trying to figure it out.

I'm copying Tamas' post because I had problems accessing the site, so here is the post just as Tamas published it:

"To build this you will need to obtain the following depenencies. The Bouncy Castle versions may need to be different based on your JDK level.

bcpg-jdk15-141.jar

bcprov-jdk15-141.jar

commons-cli-1.1.jar

Then you can try something like:

java net.tamas.bcpg.DecryptAndVerifyFile -d test2_secret.asc -p secret -v test1_pub.asc -i test.txt.asc -o verify.txt

And expect to get a verify.txt that's the same as test.txt. Maybe.

Here’s the download: PgpDecryptAndVerify.zip"

And this is the original link: http://www.tamas.net/Home/PGP_Samples.html

Thanks a lot Tamas

I had to access an old VSS database and nobody remembered any user password or the admin password.

The tool from this page was a lifesaver: http://not42.com/2005/06/16/visual-source-safe-admin-password-reset

If you have heavy processes in ColdFusion, a nice thing is to track them with the jvmstat tool.

You can get the jvmstat tool from http://java.sun.com/performance/jvmstat/

And this post shows some useful information on how to use the tool with ColdFusion:

http://www.petefreitag.com/item/141.cfm

Just more details about scripting

Using the MS Scripting Object

The MS Scripting Object can be used in .NET applications. But it has several limitations.

The main limitation is that all scripted objects must be exposed through pure COM. The scripting object is a COM component that knows nothing about .NET.

In general you could do something like the following to expose a component thru COM:

[System.Runtime.InteropServices.ComVisible(true)]

public partial class frmTestVBScript : Form

{

//Rest of code

}

NOTE: you can use that code to do a simple exposure of the form to COM Interop. However to provide a full exposure of a graphical component like a form or user control you should use the Interop Form ToolKit from Microsoft http://msdn.microsoft.com/en-us/vbasic/bb419144.aspx

That is enough to expose an object to COM. However, most of the properties and methods of the System.Windows.Forms.Form class use native .NET types instead of COM types.

For example, a property like BackColor has to be wrapped like this:

public int MyBackColor

{

get { return System.Drawing.ColorTranslator.ToOle(this.BackColor); }

set { this.BackColor = System.Drawing.ColorTranslator.FromOle(value); }

}

Issues:

- The problem with properties such as those is that System.Drawing.Color is not COM exposable.

- Your script will expect an object exposing COM-compatible properties.

- Another problem with that is that there might be some name collision.

Using Forms

In general, to use your scripts without a lot of modification, you should do something like this:

- Your forms must mimic the interfaces exposed by VB6 forms. To do that, you can use a tool like OLE2View and take a look at the interfaces in VB6.OLB.

- Using those interfaces, create an interface in C#.

- Make your forms implement that interface.

- If your customers have forms that they expose through COM, and those forms add new functionality, do this:

- Create a new interface, that extends the basic one you have and

I’m attaching an application showing how to do this.

Performing a CreateObject and Connecting to the Database

The CreateObject command can still be used. To allow compatibility, the .NET components must expose the same ProgIds that the original components used.

ADODB can still be used, and probably RDO and DAO (these last two I haven’t tried a lot).

So I tried a simple script like the following to illustrate this:

Sub ConnectToDB

'declare the variable that will hold the new connection object

Dim Connection

'create an ADO connection object

Set Connection = CreateObject("ADODB.Connection")

'declare the variable that will hold the connection string

Dim ConnectionString

'define connection string, specify database driver and location of the database

ConnectionString = "Driver={SQL Server};Server=MROJAS\SQLEXPRESS;Database=database1;Trusted_Connection=YES"

'open the connection to the database

Connection.Open ConnectionString

MsgBox "Success Connect. Now lets try to get data"

'declare the variable that will hold our new object

Dim Recordset

'create an ADO recordset object

Set Recordset = CreateObject("ADODB.Recordset")

'declare the variable that will hold the SQL statement

Dim SQL

SQL = "SELECT * FROM Employees"

'Open the recordset object executing the SQL statement and return records

Recordset.Open SQL, Connection

'first of all determine whether there are any records

If Recordset.EOF Then

MsgBox "No records returned."

Else

'if there are records then loop through them

Do While Not Recordset.EOF

MsgBox Recordset("EmployeeName") & " -- " & Recordset("Salary")

Recordset.MoveNext

Loop

End If

MsgBox "This is the END!"

End Sub

I tested this code with the sample application I’m attaching. Just paste the code, press Add Code, then type ConnectToDB and executeStatement

I’m attaching an application showing how to do this. Look at extended form. Your users will have to make their forms extend the VBForm interface to expose their methods.

Using Events

Event handling has some issues.

All events have to be renamed. At least this is my current experience; I have to investigate further, but the .NET support for COM events does its binding by class name. I think there is a workaround for this, but I have not yet had time to test it.

In general you must create an interface with all the events and rename them (in my sample I just renamed them to <Event>2); then you can use these events.

You must also add handlers for the .NET events to raise the COM events.

#region "Events"

public delegate void Click2EventHandler();

public delegate void DblClick2EventHandler();

public delegate void GotFocus2EventHandler();

public event Click2EventHandler Click2;

public event DblClick2EventHandler DblClick2;

public event GotFocus2EventHandler GotFocus2;

public void HookEvents()

{

this.Click += new EventHandler(SimpleForm_Click);

this.DoubleClick += new EventHandler(SimpleForm_DoubleClick);

this.GotFocus += new EventHandler(SimpleForm_GotFocus);

}

void SimpleForm_Click(object sender, EventArgs e)

{

if (this.Click2 != null)

{

try

{

Click2();

}

catch { }

}

}

void SimpleForm_DoubleClick(object sender, EventArgs e)

{

if (this.DblClick2 != null)

{

try

{

DblClick2();

}

catch { }

}

}

void SimpleForm_GotFocus(object sender, EventArgs e)

{

if (this.GotFocus2 != null)

{

try

{

GotFocus2();

}

catch { }

}

}

#endregion

Alternative solutions

Sadly, there isn’t currently a nice solution for scripting in .NET. Some people have done some work to implement something like VBScript in .NET (including myself, as a personal project that is not mature enough yet; I would like your feedback to know whether you would be interested in a managed version of VBScript). Currently the most mature solution I have seen is Script.NET, which is a true interpreter: http://www.codeplex.com/scriptdotnet. Microsoft is also working on the DLR (Dynamic Language Runtime), which is the runtime I’m using for my pet VBScript project.

The problem with some of the other solutions is that they let you use a .NET language like C#, VB.NET or JScript.NET and compile it. This process generates a new assembly that is then loaded into the running application domain of the .NET virtual machine, and once an assembly is loaded it cannot be unloaded. So if you compile and load a lot of scripts, you will consume your memory. There are some solutions for this memory consumption issue, but they require other changes to your code.

Using other alternatives (unless you use a .NET implementation of VBScript, and currently there isn’t a mature one) will require updating all your user scripts. Most of the newer scripting languages are variants of JavaScript.

Migration tools for VBScript

There aren’t a lot of tools for this task, but you can use http://slingfive.com/pages/code/scriptConverter/

Download the code from: http://blogs.artinsoft.net/public_img/ScriptingIssues.zip

I saw this with Francisco and this is one possible solution:

ASP Source

rs.Save Response, adPersistXML

rs is an ADODB.RecordSet variable, and its result is being written to the ASP Response

Wrong Migration

rs.Save(Response, ADODB.PersistFormatEnum.adPersistXML); // <-- The ASP.NET Response is not COM, but ADODB.Recordset is a COM object

So we cannot write directly to the ASP.NET response. We need a COM Stream object.

Solution

ADODB.Stream s = new ADODB.Stream();

rs.Save(s, ADODB.PersistFormatEnum.adPersistXML);

Response.Write(s.ReadText(-1));

In this example an ADODB.Stream object is created, the data is written into it, and then it is flushed to the ASP.NET response.

As you might know, ArtinSoft recently released Aggiorno, a smart tool for fixing pages that improves page structure and enforces compliance with web standards in a friendly and unobtrusive way. Some remarks on the tool’s capabilities can be seen here. Some days ago I had the opportunity to use part of the framework on which the tool is built. This framework will be available in a forthcoming version so that users can develop extensions of the tool for their specific needs.

Such needs may vary, of course; in our case we are interested in analyzing style sheets (CSS) and style-affecting directives in order to help predict and explain potential differences among browsers. The idea is to include such a feature in a future version of the tool. This intended tool, though similar, should be considerably smarter than existing and quite nice tools like Firebug with respect to CSS understanding. Our idea was to implement it using the Aggiorno components; we are still working on it, but so far it has been very easy to get a rich model of a page and its styles for our purposes.

Our intention is to use a special form of temporal reasoning to explain potentially conflicting behaviors at the CSS level. Using the framework we are able to build an automaton (actually a transition system) modeling the CSS behavior (both the intended and the actual one). We incorporate dynamic (rendered, browser-dependent) information in addition to the static information provided by the styles and the page under analysis. Dynamic information is available through Aggiorno’s framework, which is naturally quite nice to have. Just to give you a first impression of the potential, we show a simple artificial example of how we model the styling information under our logic approach here. Our initial impressions of the potential of such a framework are quite encouraging, so we hope it becomes public very soon for similar or better developments according to your own needs.

In VB6 you could create an out-of-process instance to execute some actions. There is no direct equivalent for that in .NET. However, you can run a class in another application domain to produce a similar effect, which can be helpful in a couple of scenarios.

This example consists of two projects. One is a console application, and the other is a class library that holds a class that we want to run like an "OutOfProcess" instance. In this scenario, the console application does not necessarily know the type of the object beforehand. This technique can be used, for example, for a plugin or add-in implementation.

Code for Console Application

using System;

using System.Text;

using System.IO;

using System.Reflection;

namespace OutOfProcess

{

/// <summary>

/// This example shows how to create an object in an

/// OutOfProcess similar way.

/// In VB6 you were able to create an ActiveX-EXE, so you could create

/// objects that execute in their own process space.

/// In some scenarios this can be achieved in .NET by creating

/// instances that run in their own

/// 'ApplicationDomain'.

/// This simple class shows how to do that.

/// Disclaimer: This is a quick and dirty implementation.

/// The idea is get some comments about it.

/// </summary>

class Program

{

delegate void ReportFunction(String message);

class RemoteTextWriter : TextWriter

{

ReportFunction report;

public RemoteTextWriter(ReportFunction report)

{

this.report = report;

}

public override Encoding Encoding

{

get

{

return new UnicodeEncoding(false, false);

}

}

public override void Flush()

{

//Nothing to do here

}

public override void Write(char value)

{

//ignore

}

public override void Write(string value)

{

report(value);

}

public override void WriteLine(string value)

{

report(value);

}

//This is very important, especially if you have a long running process

// Remoting has a concept called Lifetime Management.

//Returning null from this method makes your remoting objects immortal

public override object InitializeLifetimeService()

{

return null;

}

}

static void ReportOut(String message)

{

Console.WriteLine("[stdout] " + message);

}

static void ReportError(String message)

{

ConsoleColor oldColor = Console.ForegroundColor;

Console.ForegroundColor = ConsoleColor.Red;

Console.WriteLine("[stderr] " + message);

Console.ForegroundColor = oldColor;

}

static void ExecuteAsOutOfProcess(String assemblyFilePath,String typeName)

{

RemoteTextWriter outWriter = new RemoteTextWriter(ReportOut);

RemoteTextWriter errorWriter = new RemoteTextWriter(ReportError);

//<-- This is my path, change it for your app

//Type superProcessType = AspUpgradeAssembly.GetType("OutOfProcessClass.SuperProcess");

AppDomain outofProcessDomain =

AppDomain.CreateDomain("outofprocess_test1",

AppDomain.CurrentDomain.Evidence,

AppDomain.CurrentDomain.BaseDirectory,

AppDomain.CurrentDomain.RelativeSearchPath,

AppDomain.CurrentDomain.ShadowCopyFiles);

//When the invoke member is called this event must return the assembly

AppDomain.CurrentDomain.AssemblyResolve += new ResolveEventHandler(outofProcessDomain_AssemblyResolve);

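//Set the path before creating the instance, so the AssemblyResolve handler can already find the assembly

assemblyPath = assemblyFilePath;

Object outofProcessObject =

outofProcessDomain.CreateInstanceFromAndUnwrap(

assemblyFilePath, typeName);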

outofProcessObject.

GetType().InvokeMember("SetOut",

BindingFlags.Public | BindingFlags.Instance | BindingFlags.InvokeMethod,

null, outofProcessObject, new object[] { outWriter });

outofProcessObject.

GetType().InvokeMember("SetError",

BindingFlags.Public | BindingFlags.Instance | BindingFlags.InvokeMethod,

null, outofProcessObject, new object[] { errorWriter });

outofProcessObject.

GetType().InvokeMember("Execute",

BindingFlags.Public | BindingFlags.Instance | BindingFlags.InvokeMethod,

null, outofProcessObject, null);

Console.ReadLine();

}

static void Main(string[] args)

{

string testAssemblyPath =

@"B:\OutOfProcess\OutOfProcess\OutOfProcessClasss\bin\Debug\OutOfProcessClasss.dll";

ExecuteAsOutOfProcess(testAssemblyPath, "OutOfProcessClass.SuperProcess");

}

static String assemblyPath = "";

static Assembly outofProcessDomain_AssemblyResolve(object sender, ResolveEventArgs args)

{

try

{

//We must load it to have the metadata and do reflection

return Assembly.LoadFrom(assemblyPath);

}

catch

{

return null;

}

}

}

}

Code for OutOfProcess Class

using System;

using System.Collections.Generic;

using System.Text;

namespace OutOfProcessClass

{

public class SuperProcess : MarshalByRefObject

{

public void SetOut(System.IO.TextWriter newOut)

{

Console.SetOut(newOut);

}

public void SetError(System.IO.TextWriter newError)

{

Console.SetError(newError);

}

public void Execute()

{

for (int i = 1; i < 5000; i++)

{

Console.WriteLine("running running running ");

if (i%100 == 0) Console.Error.Write("an error happened");

}

}

}

}

We found some machines that do not show the "Attach To Process" option.

This is very important for us, especially if you are testing the migration of a VB6 ActiveX EXE or ActiveX DLL to C#.

There is a bug reported by Microsoft: http://support.microsoft.com/kb/929664

Just follow the Tools/Import Settings wizard to the end. Close and restart VS and the options will reappear.

You might also find that the Configuration Manager is not available, for example to switch between Release and Debug.

To fix this problem just go to Tools -> Options -> Projects and Solutions -> General and make sure the option "Show advanced build configurations" is checked.